Opinion & Analysis

Digital Data Steward — Leveraging Agentic AI for Data Quality, Metadata, Master Data Management, and Data Retention.

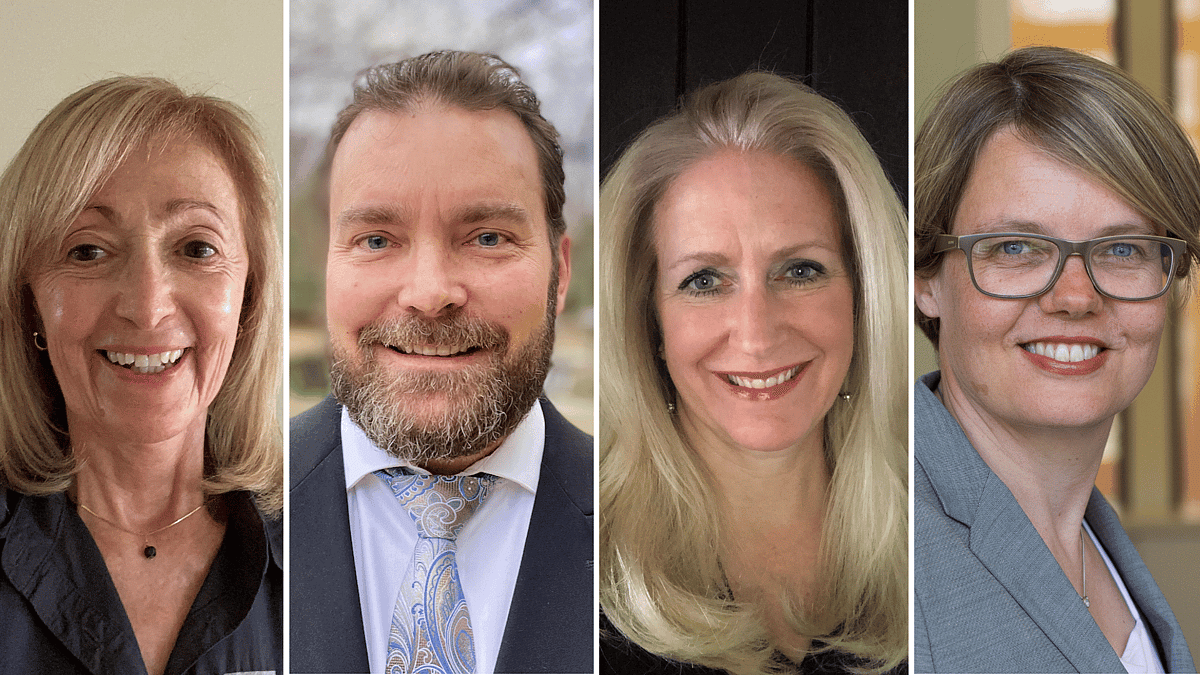

Written by: Maria C. Villar | Co-founder and Managing Partner, Business Data Leadership; Mike Alvarez | CTO and Head of Product, NeuZeit; Elizabeth (Beth) Hiatt | Head of Global Data Governance, PayPal; Christine Legner | Professor of Information Systems, HEC, University of Lausanne

Updated 2:00 PM UTC, August 28, 2025

This is the third article in a four-part series exploring the transformative role of AI Agents and their potential to address persistent challenges in Data Governance. It focuses on four critical aspects of data stewardship — data quality, metadata management, master data, and data retention — and reviews what AI Agents already can do, while providing an outlook on the future.

Augmenting critical fields of data stewardship with AI agents

As data continues to grow in complexity and importance, organizations need smarter, more agile, and scalable approaches to manage it. The Digital Data Steward (DDS), powered by AI Agents, represents the next evolution in Data Management — combining the best of human expertise with the power of artificial intelligence. In this article, we deep-dive into four critical areas of data stewardship: data quality, metadata management, master data, and data retention.

These areas are critical for executing the domain data strategy (see article 2) and build upon its core inputs, specifically strategic themes and critical data elements (CDEs). Despite advances in tooling, these four areas still demand considerable manual effort and deep domain knowledge from data stewards.

Today, we are already witnessing numerous examples of specialized AI agents and Large Language Models (LLMs) taking over tasks that were traditionally handled by data stewards. This article illustrates what is already possible today, and how these capabilities may be further extended with Agentic AI, bringing us closer to the vision of the Digital Data Steward (see Table 1).

We specifically propose four agentic AI systems for key data steward responsibilities:

- Data Quality Agent

- Metadata Management Agent

- Master Data Agent

- Data Retention Agent


Figure 1: Digital Data Steward Agent map (Zoom on Data Quality, Metadata, Master Data, and Data Retention)

Improving data accuracy, consistency and reliability with the Data Quality Agent

Data quality is a critical aspect for any digitalization and AI initiative: without accurate, consistent, and reliable data, these initiatives will ultimately fail. AI agents can significantly automate and augment many of the highly manual tasks performed by data stewards, as well as enhance the curation of data assets for operational and analytical purposes.

Many data quality tools already use AI for foundational data quality tasks, such as:

- Data profiling and anomaly detection: Scanning data for outliers, missing values, or inconsistencies using ML and statistical rules.

- Automated remediation: Fixing simple issues (e.g., format correction, deduplication) automatically, while complex ones are flagged for humans.

- Detection of (simple) data quality rules: Identifying basic rules, specifically for validity, completeness, uniqueness, or consistency, and straightforward business logic, as illustrated in Figure 2 for an HR dataset with rules that were detected by ChatGPT.

Figure 2: Data quality rules in an HR dataset detected by ChatGPT
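To make the profiling and anomaly detection capability above more concrete, here is a minimal sketch in Python. It is an illustration only, not how any particular data quality tool works: the function name `profile_column`, the sample salary values, and the choice of the common 1.5 × IQR outlier rule are all assumptions made for this example.

```python
from statistics import quantiles


def profile_column(values):
    """Profile one numeric column: count missing values and flag IQR outliers.

    Hypothetical helper for illustration; expects at least two present values.
    """
    present = [v for v in values if v is not None]
    missing = len(values) - len(present)

    # Quartiles from the standard library (default "exclusive" method).
    q1, _, q3 = quantiles(present, n=4)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr

    # Values outside the fences are candidates for steward review.
    outliers = [v for v in present if v < low or v > high]
    return {"missing": missing, "outliers": outliers}


# Made-up salary column from an HR dataset, with gaps and one suspicious entry.
salaries = [52000, 48000, None, 51000, 50000, 49500, 990000, None, 50500, 51500]
report = profile_column(salaries)
print(report)
```

In practice, a rule like this would only produce candidates; whether a flagged value is a true error remains a judgment for the data steward or a downstream agent.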

While AI agents are already powerful, they are mostly focused on simple and clearly defined repetitive tasks. What is needed for our vision of the Data Quality Agent — as a component of the Digital Data Steward — is the capability for cross-agent orchestration and feedback to predict, listen, alert, and of course, correct.

The Data Quality Agent requires orchestration and collaboration of agents that are trained to perform more complex tasks and truly augment human data stewards’ capacities (see Figure 3).

Figure 3: Data Quality Agent

Example scenario for the Data Quality Agent: Imagine a financial institution deploying the Data Quality Agent to augment its human data stewards’ abilities and improve the quality of its customer data. In this scenario, the Data Quality Agent could be responsible for:

- Scanning vast amounts of customer data from different sources, including structured data from CRM systems as well as unstructured data from e-mails or customer interactions, to identify patterns, relationships, and anomalies.

- Clustering and grouping similar data exceptions (e.g., similar types of address errors or repeated customer record anomalies) and correcting them.

- Assisting with more sophisticated data quality root cause analysis leveraging metadata lineage, log correlation, and process mining to discover process causes.

- Pushing discovered root causes to other agents to update lineage, data contracts, or changes to processes.

- Helping create complex business rules based on input in human language. Other agents could then read these rules as input and use them to create code, tests, or policies in specific systems or platforms.

- Monitoring and drafting the problem ticket and narrative report of data quality findings to trigger actions.
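The clustering of similar data exceptions mentioned in the scenario above can be sketched very simply. The example below is an assumption-laden illustration: the exception format (`record_id`, `field`, `message`) and the helper names are hypothetical, and a real agent would likely use richer similarity measures than the message normalization shown here.

```python
import re
from collections import defaultdict


def signature(exc):
    """Reduce an exception to a (field, message pattern) pair.

    Quoted values and numbers are masked so that similar errors share a signature.
    """
    msg = re.sub(r"'[^']*'", "'<value>'", exc["message"])
    msg = re.sub(r"\d+", "<n>", msg)
    return (exc["field"], msg)


def cluster_exceptions(exceptions):
    """Group record ids by exception signature."""
    clusters = defaultdict(list)
    for exc in exceptions:
        clusters[signature(exc)].append(exc["record_id"])
    return dict(clusters)


# Made-up exceptions, as a data quality check might emit them.
exceptions = [
    {"record_id": 1, "field": "postal_code", "message": "invalid postal code '9410'"},
    {"record_id": 2, "field": "postal_code", "message": "invalid postal code 'ABCDE'"},
    {"record_id": 3, "field": "email", "message": "missing value"},
    {"record_id": 4, "field": "postal_code", "message": "invalid postal code '1'"},
]
clusters = cluster_exceptions(exceptions)
for key, record_ids in clusters.items():
    print(key, record_ids)
```

Grouping exceptions this way lets one fix, or one root cause investigation, cover a whole cluster instead of being repeated record by record.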

Providing context and meaning with the Metadata Management Agent

Metadata provides context and meaning to data, which is critical for users to discover, understand, and leverage information effectively. Similar to data quality tools, many of the metadata management tools leverage AI today in the following areas:

- Metadata extraction: Automatically determining the schema of new data sources and extracting (mostly) technical metadata.

- Continuous catalog updates: Populating and updating data catalogs, and enriching descriptions (with NLP for unstructured data).

- Automated lineage stitching: Connecting and reconciling fragmented data lineage information from multiple systems.

- Data sensitivity classification: Automatically identifying and classifying sensitive data (e.g., PII, PHI) based on its content and context, applying appropriate security policies.
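As a rough illustration of data sensitivity classification, the sketch below labels a column by checking sample values against patterns and, failing that, by looking for hints in the column name. Everything in it is an assumption for demonstration purposes: the two regular expressions, the 0.8 threshold, the name hints, and the label strings are hypothetical and far simpler than what production classifiers use.

```python
import re

# Illustrative patterns only; real classifiers cover many more data types.
PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
}
NAME_HINTS = ("name", "birth", "phone")


def classify_column(name, samples, threshold=0.8):
    """Return a sensitivity label for a column based on content, then on its name."""
    values = [s for s in samples if s]
    for label, pattern in PATTERNS.items():
        if values:
            share = sum(bool(pattern.match(v)) for v in values) / len(values)
            if share >= threshold:
                return f"PII:{label}"
    # Fall back to context: the column name itself may signal personal data.
    if any(hint in name.lower() for hint in NAME_HINTS):
        return "PII:name_hint"
    return "non-sensitive"


print(classify_column("contact", ["a@x.org", "b@y.com", ""]))
print(classify_column("first_name", ["Ana"]))
print(classify_column("sku", ["A-100"]))
```

Combining content checks with name-based context mirrors the "content and context" idea in the bullet above, although any resulting label would still need review before security policies are applied.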

A more comprehensive Metadata Management Agent – within the Digital Data Steward framework – extends and orchestrates these specialized agents to assist human data stewards in creating and consistently maintaining the data dictionaries and metadata repositories for their domain.

To provide meaningful metadata, the Metadata Management Agent needs to be familiar with the internal vocabularies and glossaries, continuously learn glossary definitions and enrichment rules from one domain, and proactively suggest them in another. This is specifically important for the various unstructured data sources — such as documents, e-mails, and reports — that feed an increasing number of LLMs and GenAI applications.

Example scenario for the Metadata Management Agent: Imagine a large e-commerce company with vast amounts of customer data. In this scenario, the Metadata Management Agent could be responsible for:

- Automatic discovery of new data sources, extracting technical metadata, inferring data schemas, and linking technical metadata to business terms based on the company-specific business vocabulary and glossaries.

- Self-healing metadata capabilities that detect, diagnose, and fix metadata drift, such as broken lineage, missing tags, and policy violations.

- Support for data discoverability and usability by translating metadata graphs into plain language that business users can understand.
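The detection step of the self-healing metadata capability described above might look like the following sketch. The catalog structure (a dictionary of entries with `tags` and `upstream` lists), the required tags, and the function name are hypothetical and not taken from any specific catalog product; the example covers only detection, not diagnosis or repair.

```python
def find_metadata_drift(catalog, required_tags=("owner", "sensitivity")):
    """Report missing required tags and lineage links that point to unknown assets."""
    issues = []
    for name, entry in catalog.items():
        for tag in required_tags:
            if not entry.get("tags", {}).get(tag):
                issues.append((name, f"missing tag: {tag}"))
        for upstream in entry.get("upstream", []):
            # A lineage edge is considered broken if its source is not cataloged.
            if upstream not in catalog:
                issues.append((name, f"broken lineage: {upstream}"))
    return issues


# Made-up catalog with one healthy entry and one drifting entry.
catalog = {
    "crm.customers": {
        "tags": {"owner": "sales-data", "sensitivity": "PII"},
        "upstream": [],
    },
    "dw.customer_360": {
        "tags": {"owner": "analytics"},
        "upstream": ["crm.customers", "erp.orders"],
    },
}
issues = find_metadata_drift(catalog)
for asset, problem in issues:
    print(asset, "->", problem)
```

An agent could run a check like this on a schedule and hand the resulting issues to other agents or to a human steward for the fix.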

Managing the lifecycle of critical data elements with the Master Data Agent

As the most critical data objects used across the organization’s business, master data is the focus of most data steward work. Today’s MDM tools increasingly embed AI along the entire data lifecycle for:

- Data creation and enrichment: Filling in missing values or generating initial records from limited inputs (e.g., auto-generating product descriptions from specs). To do so, AI recognizes existing patterns, infers likely values, or fetches standard descriptions from external knowledge bases.

- Smart matching and deduplication: Suggesting potential duplicate records or relationships, and in some cases, automating merges with human oversight.

- Data standardization and integration from multiple source systems: Unifying data across disparate datasets and standardizing schemas (e.g., TAMR).
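The smart matching and deduplication capability above can be illustrated with a small sketch that suggests, but does not merge, likely duplicate records. It uses simple string similarity from Python's standard library as a stand-in for the ML-based matching that MDM tools apply; the record layout, the `normalize` helper, and the 0.85 threshold are assumptions for this example.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(name):
    """Lowercase, drop basic punctuation, and collapse whitespace."""
    cleaned = name.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())


def suggest_duplicates(records, threshold=0.85):
    """Return (id_a, id_b, score) for record pairs whose names look alike."""
    suggestions = []
    for a, b in combinations(records, 2):
        score = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
        if score >= threshold:
            suggestions.append((a["id"], b["id"], round(score, 2)))
    return suggestions


# Made-up customer master records.
records = [
    {"id": 1, "name": "Acme Corp."},
    {"id": 2, "name": "ACME Corp"},
    {"id": 3, "name": "Globex Inc."},
]
suggestions = suggest_duplicates(records)
print(suggestions)
```

Returning suggestions rather than merging directly reflects the human oversight mentioned above: a steward confirms or rejects each proposed pair. Note that comparing every pair scales quadratically, so real systems typically narrow the candidates first.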

The Master Data Agent within the Digital Data Steward framework aims to make the lifecycle management of Critical Data Elements (CDEs) more automated, efficient, and reliable. This includes the orchestration and collaboration of agents to manage the Create, Read, Update, and Delete processes for the domain’s CDEs.

However, compliance checks are needed, specifically for sensitive and business-critical master data. Hence, the most business-critical and complex steps still rely on human expertise and contextual information, derived from the domain data strategy.

Compliance with data retention policies using the Data Retention Agent

Data retention has become a critical responsibility of the data steward role, especially as organizations face increasingly complex legal, regulatory, and ethical obligations. To meet these challenges, many modern data management tools, such as enterprise data catalogs (e.g., Collibra, Microsoft Purview, Informatica) and Master Data Management tools, now embed AI-driven features to automate and enhance compliance with data retention policies:

- Automated identification of data subject to retention rules: Analyzing metadata, data classifications, and business context to identify data that falls under specific retention requirements (e.g., PII, contracts, financial records).

- Policy assignment and enforcement: Triggering deletion, anonymization, or archiving of data when retention periods expire.
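A minimal sketch of the policy enforcement step above: given a record's category and creation date, decide whether it should be retained, archived, or deleted. The policy table, its retention periods, and the `legal_hold` flag are purely illustrative assumptions, not actual regulatory requirements or any tool's data model.

```python
from datetime import date

# Hypothetical policy table: category -> (archive after N years, delete after N years).
POLICIES = {
    "financial_record": (2, 7),
    "marketing_contact": (1, 3),
}


def retention_action(category, created, today, legal_hold=False):
    """Return 'retain', 'archive', or 'delete' for a record under the policy table."""
    if legal_hold:
        # Assumption: records under legal hold are never archived or deleted.
        return "retain"
    archive_after, delete_after = POLICIES[category]
    age_years = (today - created).days / 365.25
    if age_years >= delete_after:
        return "delete"
    if age_years >= archive_after:
        return "archive"
    return "retain"


today = date(2025, 1, 1)
print(retention_action("financial_record", date(2015, 1, 1), today))
print(retention_action("financial_record", date(2022, 1, 1), today))
print(retention_action("marketing_contact", date(2024, 6, 1), today))
```

In a real deployment, the returned action would trigger a dedicated deletion, anonymization, or archiving process, ideally with an audit trail.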

Within the Digital Data Steward framework, the Data Retention Agent collaborates closely with the Metadata Management and Master Data Agents, reads the metadata, and triggers specialized agents for deletion, anonymization, or archiving. It goes beyond enforcing existing data retention policies and proactively optimizes these policies and the related procedures based on usage patterns.

Example scenario for the Data Retention Agent: In the healthcare industry, a Data Retention Agent can support human data stewards in data retention tasks to comply with regulations like HIPAA. The tasks include:

- Automatically identifying patient medical records based on content and metadata and classifying records based on sensitivity levels (e.g., mental health records, substance abuse records).

- Enforcing retention policies based on regulatory requirements, automatically archiving records after a certain period, and securely deleting them when they are no longer needed.

- Monitoring data access and identifying potential violations of HIPAA regulations, alerting compliance officers to investigate.

- Optimizing data retention policies and predicting when data will become inactive, and automatically moving it to archive storage to free up primary storage space.

By automating these tasks, the AI agent can help healthcare organizations reduce the risk of non-compliance, improve data security, and free up valuable resources.

Conclusion

AI agents are already transforming key data management tasks, especially data quality, metadata management, master data workflows, and data retention. The value of the Digital Data Steward as an agentic system depends on how well it learns your data environment: how much information and training on your data ecosystem it is given, and how much it knows about the data management issues encountered within your organization, whether through your domain data strategy, your issue and incident management system, or other sources such as Internal Audit reports.

Over time, the Digital Data Steward is able to understand where your company has risk and ensure that agents are deployed to protect and defend the company. Fully autonomous, general-purpose agents for complex, cross-organizational data management remain a work in progress, with most organizations adopting a “human-in-the-loop” approach for critical decisions.

The last part of our four-part series will explore what it really takes to move from a promising vision of agentic AI in data stewardship to measurable, enterprise-scale value. We’ll examine the evolving role of the Chief Data Officer and the rise of collective intelligence platforms.

We’ll also address the nuanced debate between augmentation and automation, spotlight innovation in the startup ecosystem, and provide a practical timeline and recommendations for CDOs ready to lead this transformation. If you’re wondering where to start — or how to scale — the augmented future of data stewardship, Part 4 will bring it all together.

About the Authors:

Maria C. Villar brings over 30 years of experience as a transformational technology executive, having served as Chief Data Officer in both the technology and financial sectors. Currently, she is Co-founder and Managing Partner of Business Data Leadership, a firm committed to enhancing effective data and AI management practices through training, writing, coaching, and consulting. Her expertise includes enterprise data strategy, data and AI governance, business value realization, organization and change management, and ESG and Sustainability.

Recognized as a leader in the data and AI industry, Villar is a frequent speaker and author. Her accomplishments include co-authoring the book “Managing Your Business Data from Chaos to Confidence” with Theresa Kushner, developing online master classes, e-learning modules, and webinars, contributing to “Latin Business Today” since 2010, and serving as the WLDA Ventures Program Manager for an accelerator program focused on data and AI startups.

Mike Alvarez is a data and AI transformation leader with over 20 years of experience driving innovation at the intersection of data science and commercial product development. He helps organizations unlock transformative value from their data, technology, and human resources. His career spans pioneering data leadership roles at Fortune 20 companies where he delivered hundreds of millions in business value through data/AI initiatives.

As CTO and Head of Product at NeuZeit, he is focused on accelerating the value and adoption of AI for organizations with acceleration frameworks. Alvarez is passionate about helping companies navigate their data and AI transformation journey by establishing robust data foundations, deploying scalable AI solutions, and creating platforms that democratize insights to drive competitive advantage. Mike is also a board member of the AI Freedom Alliance (https://aifalliance.org/) advocating for the fair and ethical use of Artificial Intelligence.

Elizabeth (Beth) Hiatt is Head of Global Data Governance at PayPal. She has close to 30 years of experience building and deploying enterprise-wide data management and governance programs. Beth has held various data management and governance roles across business and technology in financial services, telecommunications, and hospitality. She has implemented enterprise data management programs end-to-end, developing and enabling critical functions such as data governance, data quality, and master and metadata programs. She has deep technical expertise in enterprise data architecture, helping organizations “connect the dots” across the data lifecycle. Beth is a strong, results-driven leader with experience managing large, complex organizations, specifically focusing on growing a company’s data management maturity while changing the organization’s data culture. She has written articles, including “Time to Level Up: The Evolving Role of the Chief Data Officer” published by TDWI; spoken at many conferences, including the Women Data Leaders Global Summit and the Chief Data Officer and Information Quality (CDOIQ) Symposium; and been named to CDO Magazine’s Global Data Power Women List.

Christine Legner is a Professor of Information Systems at the Faculty of Business and Economics (HEC), University of Lausanne, in Switzerland. Her research fields are data management, enterprise architecture, and business software. She is the co-founder and academic director of the Competence Center Corporate Data Quality (CC CDQ), an industry-funded research consortium and expert community dedicated to advancing the field of data management. In this role, Legner leads a research team that collaborates closely with industry experts from 20 Fortune 500 companies (BASF, Bayer, Bosch, Nestlé, Schaeffler, SAP, Siemens, and Tetrapak, among others) to develop innovative concepts, tools and methods for data management.

Together with Dr. Richard Wang, Legner also serves as the Co-Chair of the annual CDOIQ European Symposium, which brings together CDOs, CAOs, CAIOs, and senior leaders shaping the data, analytics, and AI landscape in Europe.